Benchmarking of Research Portfolios

For research institutions, it is far from trivial to establish where they stand in their field, what their strengths and weaknesses are relative to peers, and where development opportunities lie. Many benchmarking tools exist, each with advantages and drawbacks. League tables (especially subject rankings) provide a direct comparison, but often on the basis of doubtful methodology. Bibliometric indicators are prone to disciplinary distortions, since publishing and citation practices vary widely from one discipline to another.

After a major institutional reorganisation, a university wished to assess its positioning within its new perimeter. We proposed an interactive report that combines written in-depth analysis with interactive data visualisations, allowing the reader to explore the data further.

We started at the macro level, drawing on CWTS Leiden data for 5 macro-fields. (The choice of benchmark institutions has been altered from the original report for reasons of confidentiality.)

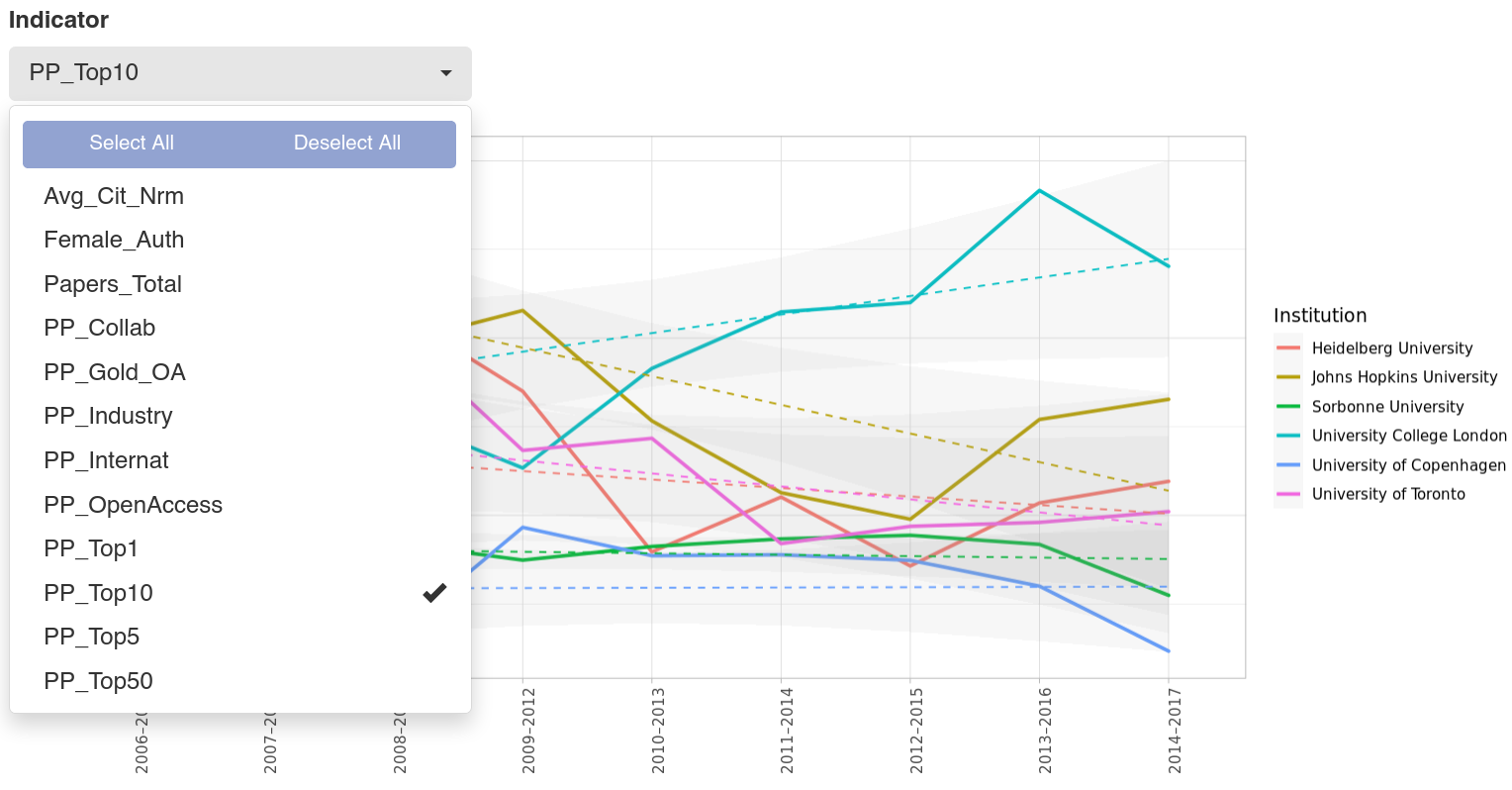

These complex plots depict the position of the benchmark institutions relative to each other (the colored flags) and to the field as a whole (the violin plots and boxplots), across a variety of publication and citation indicators published by CWTS.

Trends over time can also be studied for the benchmark institutions, again using a variety of indicators.

All of these visualisations were highly adaptive: both the list of benchmark institutions shown as flags and the reference baseline behind the violin plots and boxplots could be chosen freely.

The same range of visualisations was used for the meso-level analysis, which relied on the Global Ranking of Academic Subjects issued by the publishers of the Shanghai Ranking. This ranking features 54 subjects. For this source, the analysis was complemented in two ways. First, an overview layer depicts the position of the benchmark institutions within the subjects across 5 macro-fields.

Second, the analysis was accompanied by an in-depth study of the implications of the ranking’s methodology, so that we could avoid the pitfalls this type of source is riddled with. For instance, we analysed the de facto weight of each indicator in determining the position in the league table, which differs greatly from the nominal weights stated in the ranking’s methodology.
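To make the gap between nominal and de facto weights more concrete, here is a minimal sketch of one possible way to estimate it. It is not necessarily the method used in the report. It assumes that the total score is a weighted sum of indicator scores and measures each indicator's share of the variance of that total across institutions. The indicator names, nominal weights and scores are invented for illustration.

```python
from statistics import mean


def covariance(xs, ys):
    """Sample covariance of two equally long sequences."""
    mx, my = mean(xs), mean(ys)
    return sum((x - mx) * (y - my) for x, y in zip(xs, ys)) / (len(xs) - 1)


def de_facto_weights(scores, nominal):
    """Share of the total score's variance attributable to each indicator.

    scores:  {indicator: [score of each institution]}  (hypothetical data)
    nominal: {indicator: nominal weight}               (hypothetical data)
    Assumes the total score is the nominal-weighted sum of the indicators.
    """
    n = len(next(iter(scores.values())))
    total = [sum(nominal[k] * scores[k][i] for k in scores) for i in range(n)]
    var_total = covariance(total, total)
    if var_total == 0:
        raise ValueError("total score does not vary across institutions")
    return {
        k: covariance([nominal[k] * s for s in scores[k]], total) / var_total
        for k in scores
    }


# Invented example: two indicators with equal nominal weights.
nominal = {"publications": 0.5, "awards": 0.5}
scores = {
    "publications": [20, 60, 100],  # spreads institutions widely
    "awards": [0, 0, 10],           # most institutions score zero
}
weights = de_facto_weights(scores, nominal)
for name, share in weights.items():
    print(f"{name}: nominal {nominal[name]:.2f}, de facto {share:.2f}")
```

In this invented example both indicators carry the same nominal weight, yet the indicator that varies more across institutions accounts for most of the differences in total score, and therefore for most of the differences in rank.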

To grasp the portfolio in more detail, we finally analysed the micro level of production and citation performance. For this we used a classification of 27 subject areas subdivided into 334 fields.

To account for differences in publication and citation practices, all areas and fields were compared to a baseline of national or international production. This levels the playing field for each discipline, avoiding distortions and giving a more adequate picture of performance, especially in the Social Sciences.

A specific element of interest here is the ratio between publications and citations, which ensures that high-publishing disciplines do not overshadow the performance of disciplines with a smaller production. This ratio is rendered as the number to the right.
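The comparison to a baseline and the ratio between publications and citations can be illustrated with a small calculation. This is a minimal sketch under stated assumptions: the field names and counts are invented, and the ratio is defined here as the institution's share of baseline citations divided by its share of baseline publications. That is one plausible reading, not a formula stated in the report.

```python
from dataclasses import dataclass


@dataclass
class FieldCounts:
    publications: int
    citations: int


def relative_profile(institution, baseline):
    """Compare an institution to a baseline, field by field.

    Both arguments map a field name to FieldCounts (hypothetical data).
    Returns, per field, the institution's share of baseline publications,
    its share of baseline citations, and the ratio of the two shares.
    Fields with zero counts in a denominator are skipped.
    """
    profile = {}
    for field, inst in institution.items():
        base = baseline[field]
        if base.publications == 0 or base.citations == 0 or inst.publications == 0:
            continue
        pub_share = inst.publications / base.publications
        cit_share = inst.citations / base.citations
        profile[field] = {
            "pub_share": pub_share,
            "cit_share": cit_share,
            "ratio": cit_share / pub_share,
        }
    return profile


# Invented counts: a low-volume field next to a high-volume one.
institution = {
    "Sociology": FieldCounts(publications=40, citations=200),
    "Oncology": FieldCounts(publications=400, citations=6000),
}
baseline = {
    "Sociology": FieldCounts(publications=2000, citations=8000),
    "Oncology": FieldCounts(publications=10000, citations=200000),
}
profile = relative_profile(institution, baseline)
for field, values in profile.items():
    print(f"{field}: ratio {values['ratio']:.2f}")
```

With these invented numbers, the low-volume field has a ratio above 1 and the high-volume field a ratio below 1, even though the latter has far more publications and citations in absolute terms. A ratio above 1 means the institution attracts more citations than its publication volume alone would suggest relative to the baseline.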

Overall, this report, which combines analysis by field experts with the means to freely explore the available data, has supported our client during its institutional transition and deepened its understanding of its portfolio and of the position of its faculties and departments relative to their peers. This is crucial information for institutions seeking to base their answers to questions of strategic positioning and development on empirical evidence.

EXPLORE OTHER CASE STUDIES

Specialization Analysis

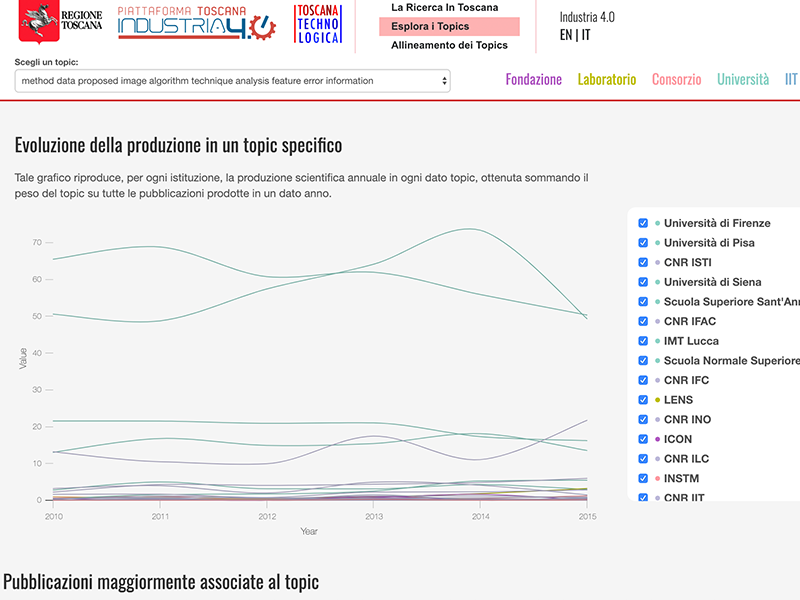

We help institutions to understand better their disciplinary strengths and weaknesses by means of relevant specialization analysis.

Finding partners and understanding the networks in a given area

Regional government, wanting to understand how its S3-related initiatives’ fit within the general Research and Innovation (R&I) panorama and other funded projects.

Competency mapping and research valorization

We support research-policymakers in discovering trends in R&D and in identifying emerging strengths of local research & innovation ecosystems.